I am a Senior Research Scientist at Google DeepMind working on world models. Before that, I was a postdoc at Meta AI (FAIR), working on 3D perception for robotics. My recent research focuses on generative world models that can be used for reasoning and planning over long horizons. I'm interested in making world models real-time and consistent by leveraging efficient abstractions, including 3D.

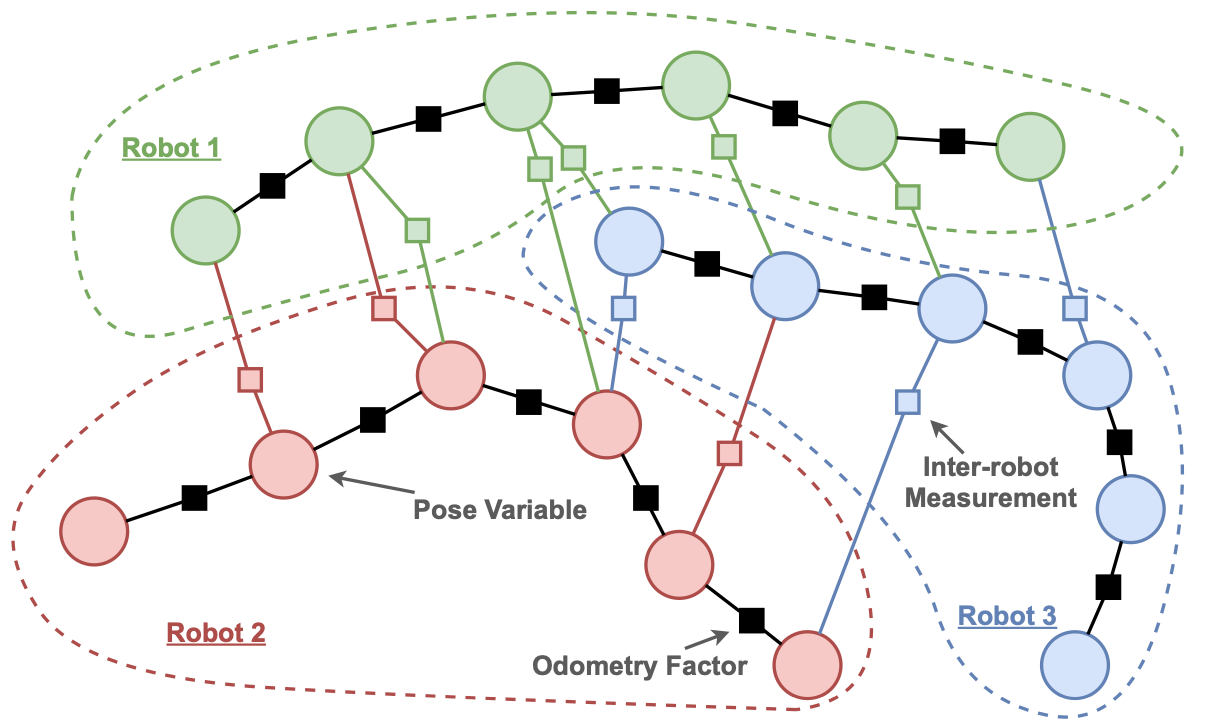

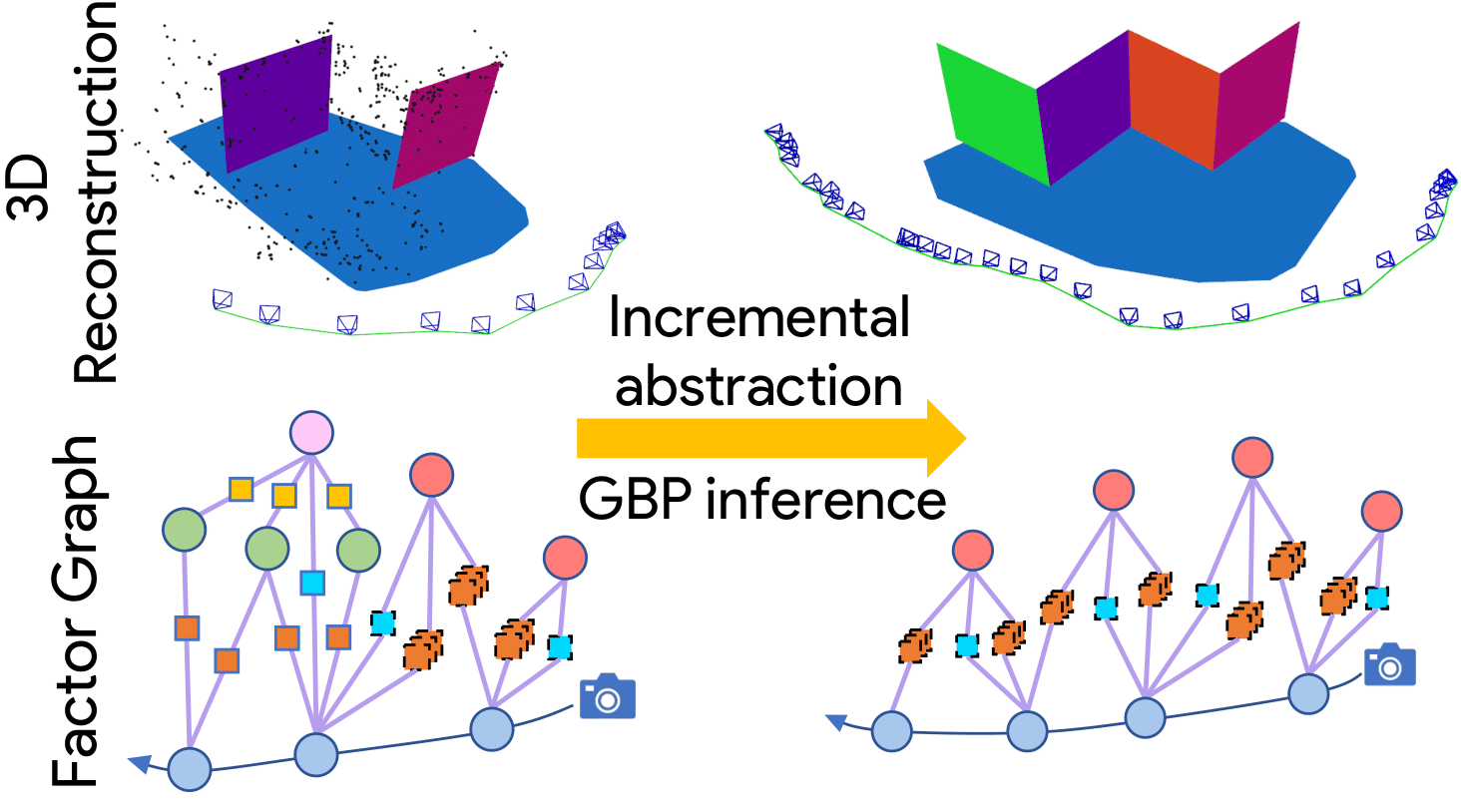

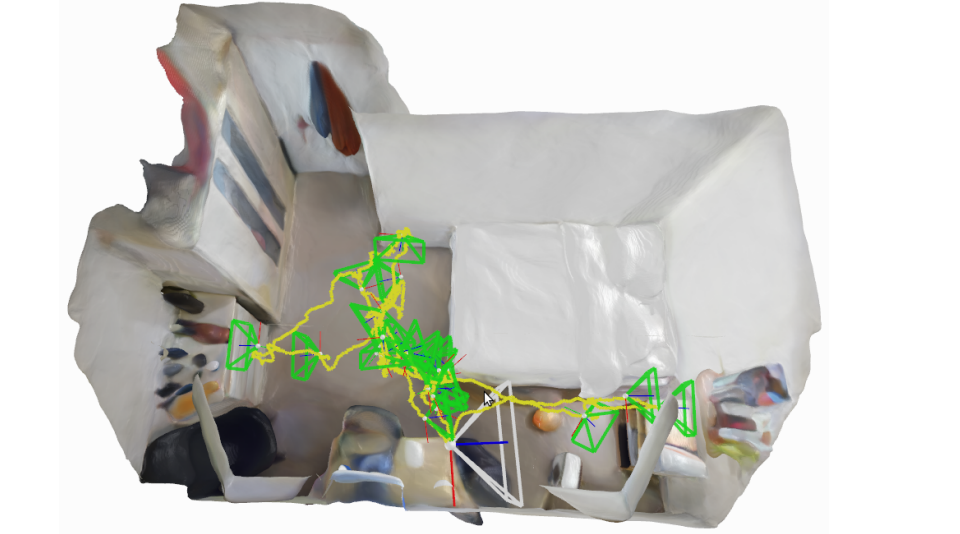

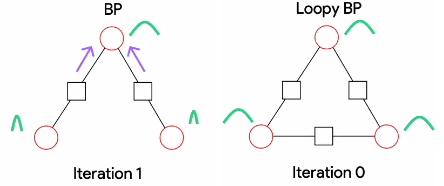

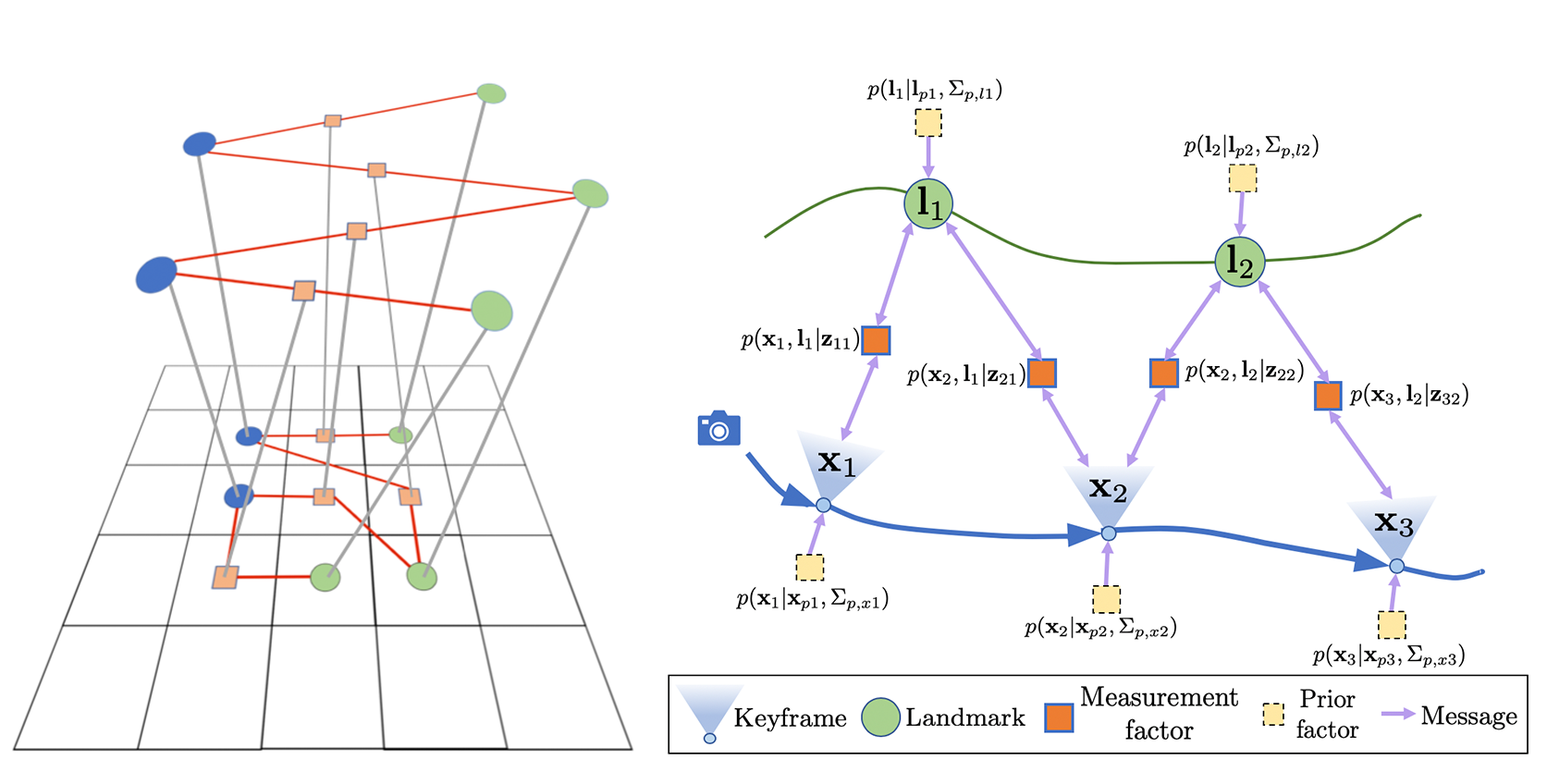

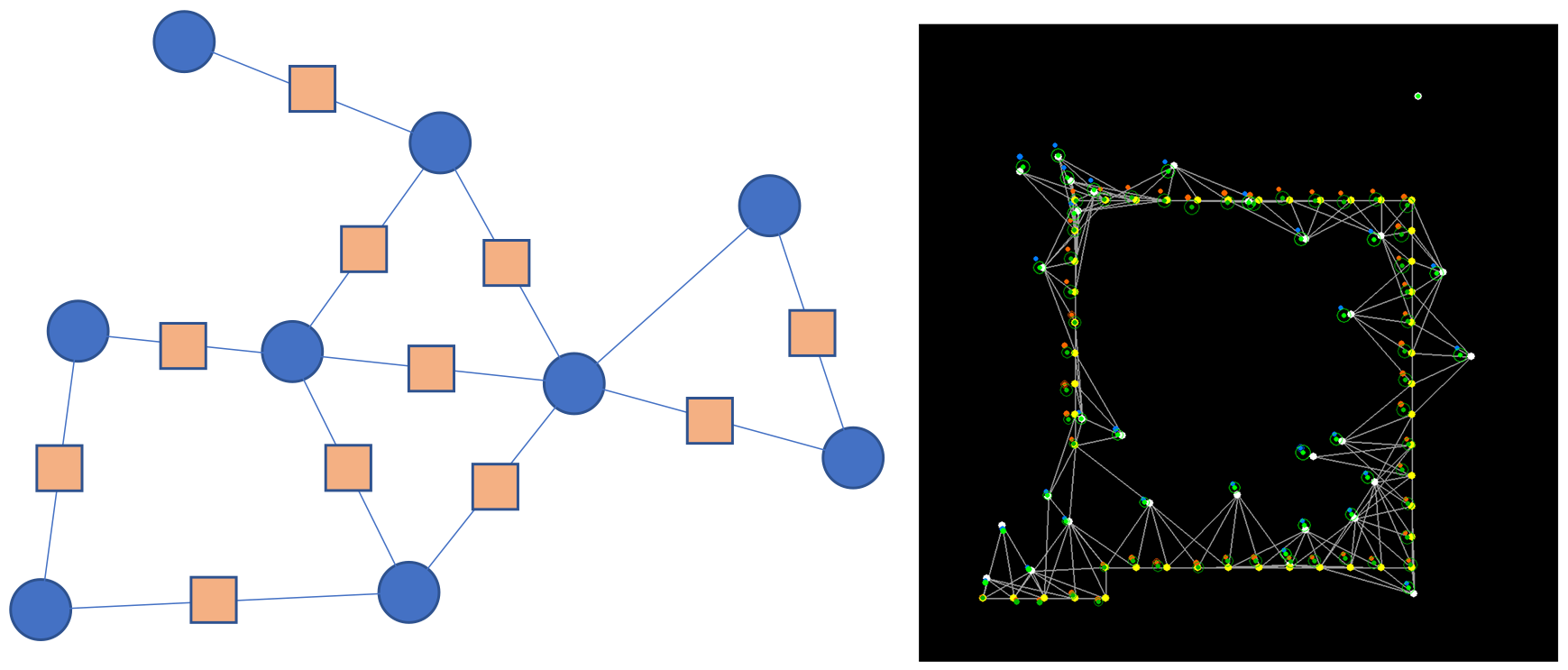

I completed my PhD at Imperial College London in 2023, advised by Prof. Andrew Davison. My work there focused on 1) graphical representations and distributed inference algorithms on graphs, and 2) training neural scene representations via continual learning for real-time robotics. I completed my undergraduate and Master's degrees in Physics at the University of Oxford.